Award-Winning Technology

A series of awards acknowledges the groundbreaking innovations of the rasdaman team over the years; these are the most recent ones:

|

DIN Innovator Award 2019

The German standardization body, DIN, has recognized Peter Baumann's contribution of datacubes to the Big Earth Data challenges with its 2019 Innovator Award.

This prestigious European award recognizes rasdaman for its innovation, its advancement of data science in industry and business, and as such also its outstanding business impact.

|

|

US-based TechConnect has recognized rasdaman's technological leadership with its prestigious innovation award, presented at the Boston event in June 2019.

According to TechConnect, "rasdaman heralds a new generation of services on massive, distributed spatio-temporal data".

|

|

The prestigious European DatSci&AI Award 2019 has recognized rasdaman for its innovation, its advancement of data science in industry and business, and, as such, its outstanding business impact. The rasdaman team is proud to be a finalist in this high-tech line-up.

|

|

NATO Defence Innovation Challenge awards rasdaman as the only product in the Data Science category

As every year, the NITEC symposium - organised by the NATO Communications and Information Agency, NCIA - saw a massive lineup of industrial and academic excellence.

For its open-source analytics capabilities, rasdaman received the annual Innovation Challenge Award in the Data Science category; the other categories were Mobile apps, Data visualization, and IoT.

The annual Innovation Challenge Award is open to actors operating at the cutting edge of technology from all 29 NATO Nations; this year's challenge focused on digital innovation.

This technology scouting is part of a large initiative: "We are seeking to broaden engagement with innovative technology drivers as NATO undergoes its largest technological modernization in decades", said NCI Agency General Manager Kevin J. Scheid.

|

|

US magazine CIOReview selects rasdaman for its list of 100 Most Promising Big Data Solutions

Published from Fremont, California, CIOReview is a print magazine that explores the many ways in which firms keep their businesses running smoothly. A distinguished panel comprising CEOs, CIOs, and IT VPs, including the CIOReview editorial board, finalized the “100 Most Promising BigData Solution Providers 2016” in the U.S. and shortlisted the best vendors and consultants.

"It’s a delightful experience to announce Rasdaman as one of the 100 Most Promising BigData Solution Providers 2016," said Jeevan George, Managing Editor of CIOReview. "Rasdaman is recognized for providing highly innovative products that helps to store and query massive, multi-dimensional array of data into the data base, which brings new dimension to the industries in the Big Data arena."

|

|

(image © Jan Kobel)

|

Copernicus Masters: Winner, Big Data Challenge [press release]

On October 23, 2014, the rasdaman team was announced winner of the Big Data Challenge of the Copernicus Masters Earth Monitoring Competition. T-Systems International GmbH awarded the prize to rasdaman as the best Copernicus service that makes use of big data analytics technologies to provide Earth observation intelligence on demand via a user-oriented web portal and mobile devices. Pictured right: the award is handed to Peter Baumann (middle) by Thorsten Rudolph, Anwendungszentrum GmbH Oberpfaffenhofen (left), and Markus Lennartz, Vice President Global Accounts / International Business, Public Sector, T-Systems International GmbH (right).

|

|

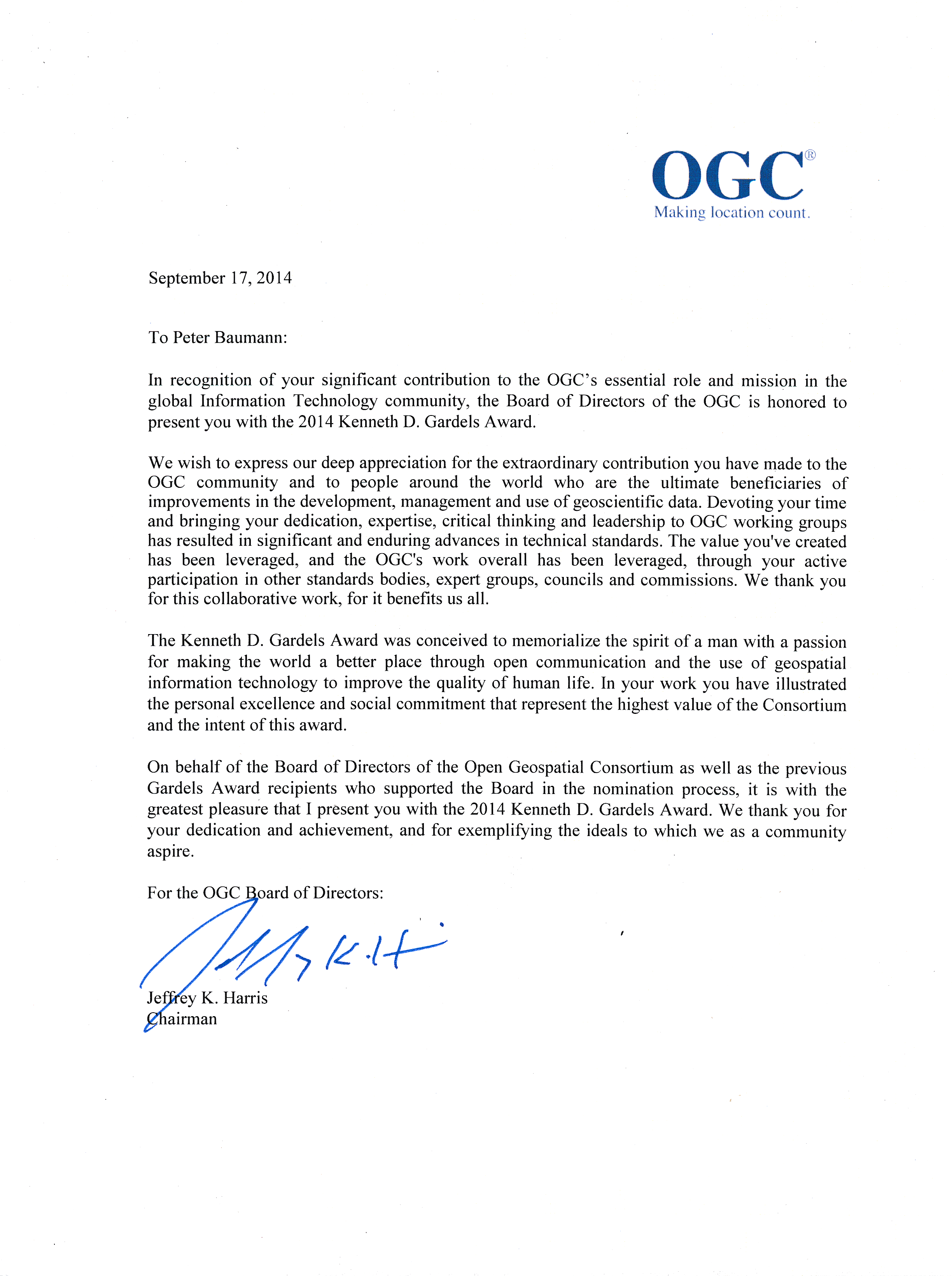

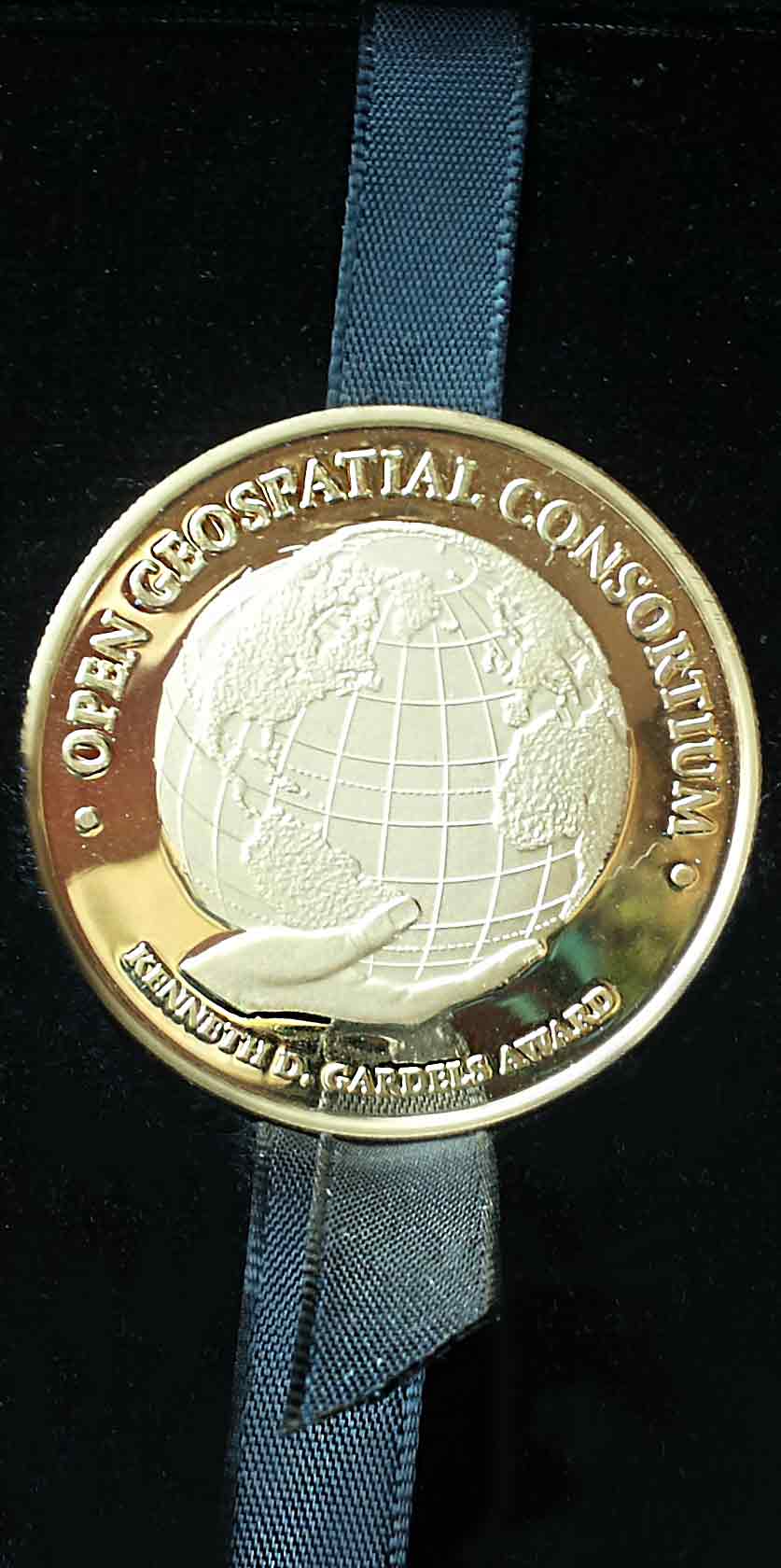

OGC Kenneth Gardels Award [press release]

On September 17, 2014, Peter Baumann received OGC's prestigious 2014 Kenneth Gardels Award. From the laudatio: "We wish to express our deep appreciation for the extraordinary contribution you have made to the OGC community and to people around the world who are the ultimate beneficiaries of improvements in the development, management and use of geoscientific data. Devoting your time and bringing your dedication, expertise, critical thinking and leadership to OGC working groups has resulted in significant and enduring advances in technical standards. The value you've created has been leveraged, and the OGC's work overall has been leveraged, through your active participation in other standards bodies, expert groups, councils and commissions. We thank you for this collaborative work, for it benefits us all."

|

|

NASA WorldWind Europe Challenge winner

During FOSS4G Europe 2014, the first pan-European open-source geo conference, the rasdaman team was awarded the crystal bull symbolizing the NASA WorldWind Europe Challenge. This annual award solicits technology worldwide that considers the INSPIRE Directive and uses NASA's open-source virtual globe technology, WorldWind, to provide societal benefit.

The rasdaman team won the 2014 challenge by showing, for the first time, an integration of the rasdaman Big Data engine with the WorldWind virtual globe while leveraging the OGC Web Coverage Processing Service (WCPS) geo query language on massive spatio-temporal datacubes. The concrete application scenario was atmospheric data analysis by determining aerosol optical properties.

|

|

Geospatial World Forum Innovation Award

Photo: the award is handed to Peter Baumann and Wim Jansen, European Commission Project Officer.

|

|